POLY version 1.7a

Download size: 16.8 MB

New features

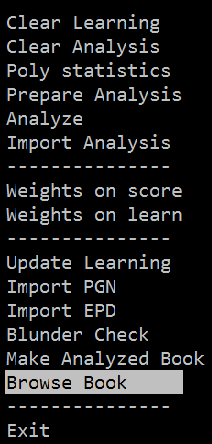

- Make Analyzed Book - Create a new and fully analyzed Polyglot opening book from a PGN collection.

- Blunder Check - Opening books contain errors; the new Blunder Check option filters many of them out.

- Browse Book - Quickly check the changes you made and whether the weights and scores of critical positions are in harmony.

- Stockfish Analysis - has been replaced with MEA analysis, which lets you use any UCI engine, including Lc0 and similar engines.

- From the ground up - Tour du POLY, a step-by-step introductory course on how to use POLY effectively.

_____________________________________________________________________________________________________

Book Analysis

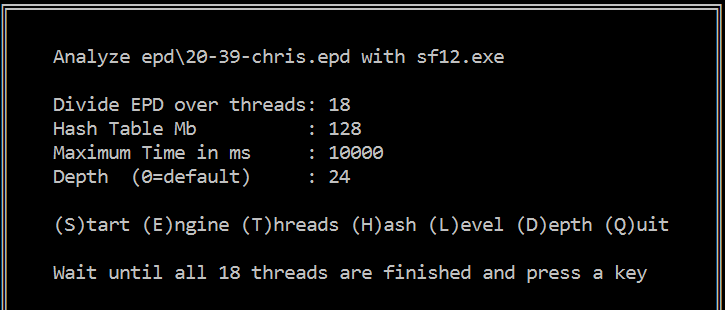

The old Stockfish Analysis has been replaced with MEA analysis, which lets you use any UCI engine, including Lc0 and friends. The old Stockfish Analysis ran via Stockfish's bench function, which does not always return reliable scores. In addition, we noticed that Stockfish 12 switches from NORMAL evaluation to NNUE evaluation after each position, which makes the scores even less reliable. MEA does not have these issues and produces reliable NNUE scores for Stockfish 12. Not much has changed in the operation; see the following example, which analyzes the ProDeo book with Stockfish 12.

Part of the ProDeo book (moves 10-20 in this case) is analyzed with Stockfish 12 at depth 24 with a maximum time of 10 seconds per position.

Command keys:

e - Select the engine

t - Select the number of threads

l - Enter the maximum time per position in milliseconds

d - Enter the search depth (a value of 0 disables the depth limit)

s - Start the analysis
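The settings above correspond closely to standard UCI commands. As a minimal sketch, assuming an analyzer that runs one search per EPD position, the Python below shows how the threads, time and depth settings could be turned into UCI commands. The helper names `epd_to_fen` and `uci_commands` are hypothetical and this is not MEA's actual code; only the commands themselves (`setoption`, `position fen`, `go depth`, `go movetime`) come from the UCI protocol.

```python
def epd_to_fen(epd: str) -> str:
    """Turn the first four EPD fields into a full FEN.

    EPD has no halfmove clock or fullmove number, so 0 and 1 are appended;
    any EPD operations after the fourth field (e.g. id "...";) are dropped.
    """
    fields = epd.split()
    return " ".join(fields[:4] + ["0", "1"])


def uci_commands(epd: str, threads: int, depth: int, movetime_ms: int) -> list[str]:
    """Hypothetical helper: build the UCI commands to analyze one EPD position.

    depth == 0 means "no depth limit", mirroring the d option above.
    """
    go_parts = ["go"]
    if depth > 0:
        go_parts += ["depth", str(depth)]
    if movetime_ms > 0:
        go_parts += ["movetime", str(movetime_ms)]
    if len(go_parts) == 1:
        # Neither limit set: refuse rather than start an unbounded search.
        raise ValueError("set a depth, a time limit, or both")
    return [
        f"setoption name Threads value {threads}",
        f"position fen {epd_to_fen(epd)}",
        " ".join(go_parts),
    ]
```

For the ProDeo example above (depth 24, 10 seconds) the last command would be `go depth 24 movetime 10000`. When both limits are given, engines such as Stockfish stop at whichever one is reached first.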

_____________________________________________________________________________________________________

Blunder Check

Opening books contain errors. Once you have an analyzed Polyglot book, the new Blunder Check option lets you filter many of them out. Consider the following hypothetical example, in which the start position has been analyzed and stored in the book as follows:

| Move | Weight | Score (pawns) |
|------|--------|---------------|
| 1. e4 | 40% | 0.36 |
| 1. d4 | 30% | 0.32 |
| 1. c4 | 10% | 0.12 |
| 1. Nf3 | 10% | 0.10 |
| 1. b4 | 5% | 0.01 |
| 1. g4 | 5% | -0.20 |

The default Blunder Check margin is 25 centipawns. With this value POLY looks for the highest score (0.36) and zeroes the weights of all moves scoring 0.11 (0.36 - 0.25) or less, so they are never played. In this case those are 1.Nf3, 1.b4 and 1.g4.

With a margin of 10 (threshold 0.26) the book will only play 1.e4 and 1.d4.

With a margin of 50 (threshold -0.14) all moves can be played except 1.g4.

And so on.
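The Blunder Check rule described above fits in a few lines. This is a minimal sketch, not POLY's code: the function names `blunder_check` and `playable` and the dictionary layout are hypothetical. Scores are kept in integer centipawns so that the inclusive comparison against (best score - margin) is not disturbed by floating-point rounding.

```python
# Example data: the start-position table above, as
# move -> (weight in %, score in centipawns).
START = {
    "e4": (40, 36),
    "d4": (30, 32),
    "c4": (10, 12),
    "Nf3": (10, 10),
    "b4": (5, 1),
    "g4": (5, -20),
}


def blunder_check(book_moves: dict[str, tuple[int, int]],
                  margin_cp: int = 25) -> dict[str, tuple[int, int]]:
    """Zero the weight of every move scoring at or below (best score - margin_cp).

    All other weights, and all scores, are returned unchanged.
    """
    best = max(score for _weight, score in book_moves.values())
    threshold = best - margin_cp
    return {
        move: (0 if score <= threshold else weight, score)
        for move, (weight, score) in book_moves.items()
    }


def playable(book_moves: dict[str, tuple[int, int]]) -> list[str]:
    """Moves that still have a non-zero weight and can therefore be played."""
    return [move for move, (weight, _score) in book_moves.items() if weight > 0]
```

`playable(blunder_check(START))` gives `['e4', 'd4', 'c4']`; with a margin of 10 it gives `['e4', 'd4']`, and with 50 every move except g4 remains, matching the three cases in the text.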

____________________________________________________________________________________________________

Tour du POLY

A step-by-step introductory course on how to use POLY effectively.

Preamble:

PGN files are stored in the PGN folder

EPD files are stored in the EPD folder

Book files are stored in the BOOKS folder

To create a new, fully analyzed Polyglot book from a PGN, select Make Analyzed Book, choose test.pgn and, when the selection screen shown above appears, press the "s" key. The analysis with Stockfish 12 starts and the end result is the book test.bin. Everything is fully automatic. That's it.

For existing Polyglot books the procedure is the same, but it takes 3 steps:

1. Prepare Analysis - Using the small test.pgn (only 10 games), an EPD line is created for each of its positions that is found in the book test.bin; these lines are collected in test.epd for use in the next step. Action: select the PGN and the book.

2. Analyze - Select the newly created test.epd and, on the selection screen (see the picture above), press the "s" key to start the analysis with the default settings. When all 4 threads have finished, press a key and the results of the 4 threads are merged.

3. Import Analysis - imports the results into the book test.bin.
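The split-and-merge part of step 2 can be illustrated as follows. This is a minimal sketch assuming each of the 4 threads analyzes its own share of test.epd and returns a dictionary of results; POLY's real intermediate files are not described here, so `split_positions`, `merge_results` and `to_epd_line` are hypothetical names. The output line uses the standard EPD opcodes `bm` (best move, in SAN), `ce` (centipawn evaluation) and `acd` (analysis depth).

```python
Result = tuple[str, int, int]  # (best move in SAN, score in centipawns, depth)


def split_positions(positions: list[str], n_threads: int = 4) -> list[list[str]]:
    """Deal the EPD positions round-robin over n_threads work lists."""
    return [positions[i::n_threads] for i in range(n_threads)]


def merge_results(parts: list[dict[str, Result]]) -> dict[str, Result]:
    """Merge the per-thread results, keyed by EPD position, into one dict."""
    merged: dict[str, Result] = {}
    for part in parts:
        merged.update(part)
    return merged


def to_epd_line(epd: str, best_move: str, score_cp: int, depth: int) -> str:
    """Write one analyzed position using the EPD opcodes bm, ce and acd."""
    return f"{epd} bm {best_move}; ce {score_cp}; acd {depth};"
```

Dealing positions round-robin rather than in contiguous blocks gives each thread a similar mix of positions, so the threads tend to finish at about the same time.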

____________________________________________________________________________________________________

Practicing

To get familiar with POLY:

1. The download includes kasparov.pgn, which you can use to create a new opening book with Make Analyzed Book.

2. The download also includes the book perfect2017 from SedatChess and two versions of it analyzed with POLY 1.7:

a. perfect2017-SF12, analyzed with Stockfish 12 at depth=24 with a maximum of 10 seconds per position;

b. perfect2017-LC0, analyzed with Lc0 with a maximum of 5 seconds per position.

Try "Browse Book", "Blunder Check" and the various tuning possibilities listed below to get familiar with the utility.

To analyze the book yourself: Analyze --> perfect2017.epd --> (S)tart, and when finished, Import Analysis.

_____________________________________________________________________________________________________

Book tuning

There are 5 ways to tune an analyzed opening book:

1. Weights on score - as described here.

2. Weights on WDL - as described here.

3. Blunder check - as described above.

4. Import from EPD - as described here.

5. Import from PGN - for instance games from tournaments or rating lists played at long time controls, see here.

____________________________________________________

First Results

Engine-vs-engine matches:

1. Applying the Blunder Check (margin 0.25) to the perfect2017-SF12 book gained 9 Elo over 1000 games with SF12 at 40/10.

2. Using Weights on score set to 0.00, meaning only the best moves are played (those with the highest Stockfish score), gained 20 Elo over 1000 games with SF12 at 40/120 (about CCRL blitz level).

Not bad at all for such a small book of 6,900 positions.
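Elo gains like these are derived from match scores. Under the usual logistic Elo model, a score fraction p corresponds to an Elo difference of -400 * log10(1/p - 1). The sketch below converts in both directions; it uses this standard formula and is not necessarily how the figures above were computed. The function names are hypothetical.

```python
import math


def elo_difference(score: float) -> float:
    """Elo difference implied by a match score fraction (0 < score < 1)."""
    if not 0.0 < score < 1.0:
        raise ValueError("score must be strictly between 0 and 1")
    return -400.0 * math.log10(1.0 / score - 1.0)


def expected_score(elo_diff: float) -> float:
    """Inverse of elo_difference: expected score fraction for an Elo difference."""
    return 1.0 / (1.0 + 10.0 ** (-elo_diff / 400.0))
```

For example, a gain of 9 Elo corresponds to an expected score of roughly 51.3%, and 20 Elo to roughly 52.9%, i.e. about 513 and 529 points out of 1000 games.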

___________________________________________

Book Browsing

Visually inspect the quality of your book in critical positions, such as those in the first 5-10 opening moves: check whether the weights are harmonious and whether the engine scores have the quality one would expect from today's top engines.

For this purpose, maintain a list of critical positions in EPD format. For demonstration purposes we created control.epd, which contains 5 such positions.

Start Book Browsing from the menu and select the book, then control.epd. The start position appears; press a key to view the next position.

Press 'q' to exit and return to the main menu.
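Weights can also be checked by reading the raw book directly. A Polyglot .bin file is a sorted array of 16-byte big-endian entries (key, move, weight, learn). The sketch below assumes you already have the 64-bit Polyglot key of the position, for example 0x463b96181691fc9c for the start position, because computing keys needs Polyglot's table of 781 random numbers, which is omitted here. The function names are hypothetical, and since this page does not describe how POLY stores its scores and depths, the learn field is left untouched.

```python
import struct

# One Polyglot book entry: key (u64), move (u16), weight (u16), learn (u32), big-endian.
ENTRY = struct.Struct(">QHHI")
FILES = "abcdefgh"


def read_entries(data: bytes) -> list[tuple[int, int, int, int]]:
    """Split raw .bin contents into (key, move, weight, learn) tuples.

    Trailing bytes that do not form a complete 16-byte entry are ignored.
    """
    usable = len(data) - len(data) % ENTRY.size
    return [ENTRY.unpack_from(data, offset) for offset in range(0, usable, ENTRY.size)]


def decode_move(move: int) -> str:
    """Decode the Polyglot move field into coordinates such as 'e2e4'.

    Castling is stored as king-takes-own-rook (e.g. 'e1h1') and is left as is.
    """
    to_file, to_row = move & 7, (move >> 3) & 7
    from_file, from_row = (move >> 6) & 7, (move >> 9) & 7
    promotion = (move >> 12) & 7  # 0 = none, 1..4 = knight, bishop, rook, queen
    text = f"{FILES[from_file]}{from_row + 1}{FILES[to_file]}{to_row + 1}"
    return text + (" nbrq"[promotion] if promotion else "")


def weight_shares(entries, key: int) -> list[tuple[str, float]]:
    """List the book moves stored for one position key with their share (%) of the total weight."""
    moves = [(decode_move(m), w) for k, m, w, _learn in entries if k == key]
    total = sum(w for _, w in moves)
    return [(mv, 100.0 * w / total if total else 0.0) for mv, w in moves]
```

With weights 40 and 30 stored for e2e4 and d2d4, `weight_shares` reports about 57.1% and 42.9%.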


_____________________________________________________________________________________________________

Hints

1. You can also use Scid to view Polyglot opening books and even to modify their weights. To see everything (weights, scores, depths and learn values), use ProDeo 3.0, which makes browsing through the book easy (see screenshot). Another option is the PG utility.

2. Engines that don't support Polyglot books can still play from them through Fabien's Polyglot utility. To do this, create a polyglot.ini file with the following contents:

    [PolyGlot]
    EngineDir = .
    EngineCommand = sf10.exe
    Book = true
    BookFile = ProDeo.bin

    [Engine]
    Hash = 128
    Threads = 1

In Arena, install a new engine called sf10, but with polyglot.exe [!] as its executable, and Stockfish 10 will play from the ProDeo book. Ain't life beautiful at times?

3. Pressing the [F12] key shows some extra POLY options, of which [F5] is the most important. It checks the PGN of a finished engine-engine match for suspect moves in an opening book. With the default settings (margin -0.50 | depth=5) it checks the first 5 moves out of book for both engines, and if the 4th move out of book has a score <= -0.50, it is reported; see the example of the ProDeo book versus the Cerebellum light book. Duplicate openings are skipped.
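The [F5] check can be sketched as below. This is a hypothetical illustration, not POLY's implementation: the input layout, the function name `suspect_games` and the parameter `check_move` are assumptions. The text names both depth=5 and the 4th move out of book, so the sketch keeps them as separate parameters: `depth` limits how many out-of-book moves are looked at, and `check_move` selects the one compared against the margin. Scores are assumed to be in pawns, from the moving engine's own point of view.

```python
def suspect_games(games, margin=-0.50, depth=5, check_move=4):
    """Report (game_id, side, score) where a side's check_move-th move out of
    book scores at or below margin.

    games: iterable of (game_id, book_line, scores) where book_line is a tuple
    of the moves played from book (used to detect duplicate openings) and
    scores maps each side ("white"/"black") to the list of its own scores
    for its moves after leaving the book.
    """
    if not 1 <= check_move <= depth:
        raise ValueError("check_move must lie within the first `depth` moves")
    seen = set()
    reports = []
    for game_id, book_line, scores in games:
        if book_line in seen:  # duplicate opening: already checked, skip it
            continue
        seen.add(book_line)
        for side, side_scores in scores.items():
            window = side_scores[:depth]  # only the first `depth` moves out of book
            if len(window) >= check_move and window[check_move - 1] <= margin:
                reports.append((game_id, side, window[check_move - 1]))
    return reports
```

Keying duplicates on the sequence of book moves means two games that left the book through the same line are checked only once.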

_____________________________________________________________________________________________________

Credits

Thanks to the Stockfish team for the use of Stockfish 12, to Fabien Letouzey for Polyglot and to David J. Barnes for Pgn-Extract.